2 Minute Medicine Rewind March 14, 2016

Survival Benefit with Kidney Transplants from HLA-Incompatible Live Donors

Many patients in the United States with anti-HLA antibodies remain on kidney transplantation waiting lists for long periods. A report from a single center found a survival benefit from receiving a kidney transplant from an HLA-incompatible live donor compared with remaining on the waiting list. The purpose of this study was to further investigate this finding. In this multicenter study, the authors estimated the survival benefit for recipients of kidney transplants from HLA-incompatible live donors, who were matched to two control groups: controls who remained on the waiting list or received a transplant from a deceased donor, and controls who remained on the waiting list and did not receive a transplant. The authors found that recipients of kidneys from incompatible live donors had a higher survival rate than either control group at 1 year (95.0% vs. 94.0% for the waiting-list-or-transplant control group and 89.6% for the waiting-list-only control group), 3 years (91.7% vs. 83.6% and 72.7%, respectively), 5 years (86.0% vs. 74.4% and 59.2%), and 8 years (76.5% vs. 62.9% and 43.9%) (p<0.001 for all comparisons). Compared with controls who remained on the waiting list or received a transplant from a deceased donor, the 8-year survival rate was 13.6 percentage points higher (risk of death reduced by a factor of 1.83); compared with controls who remained on the waiting list and did not receive a transplant from a deceased donor, it was 32.6 percentage points higher (risk of death reduced by a factor of 3.37). This survival benefit was seen across all donor-specific antibody levels. Therefore, the results of this study provide further evidence that receiving a kidney from an HLA-incompatible live donor confers a survival benefit compared with staying on the waiting list or waiting for a transplant from a deceased donor.
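For readers who want to check the arithmetic, the 8-year absolute increases quoted above follow directly from the reported survival rates. The short Python sketch below reproduces them; the dictionary keys and the function name are illustrative labels chosen here, not terms from the study, and the reported risk-reduction factors (1.83 and 3.37) come from the study's own models and cannot be derived from these percentages alone.

```python
# 8-year survival rates (%) as reported in the summary above.
# The key names are illustrative labels, not terminology from the study.
SURVIVAL_8Y = {
    "incompatible_live_donor": 76.5,
    "waiting_list_or_transplant": 62.9,
    "waiting_list_only": 43.9,
}


def absolute_increase(treated: float, control: float) -> float:
    """Return the difference in percentage points, rounded to one decimal."""
    return round(treated - control, 1)


treated = SURVIVAL_8Y["incompatible_live_donor"]
vs_wl_or_tx = absolute_increase(treated, SURVIVAL_8Y["waiting_list_or_transplant"])
vs_wl_only = absolute_increase(treated, SURVIVAL_8Y["waiting_list_only"])

print(f"vs. waiting-list-or-transplant controls: {vs_wl_or_tx} percentage points")
print(f"vs. waiting-list-only controls: {vs_wl_only} percentage points")
```

These are differences in percentage points (absolute differences), not relative changes, which is why they are reported separately from the risk-reduction factors.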

Relationship Among Body Fat Percentage, Body Mass Index, and All-Cause Mortality: A Cohort Study

The purpose of this observational study was to assess the relationship of body mass index (BMI) and body fat percentage with all-cause mortality in a large Canadian population cohort of middle-aged and older males and females from 1999 to 2013. Body mass index was divided into quintiles, with quintile 1 as the lowest and quintile 5 as the highest. The authors hypothesized that greater body fat percentage (rather than BMI) would be independently associated with increased mortality. In their mortality models, they found that both a low BMI (HR, 1.44 [95% CI, 1.30 to 1.59] for quintile 1 and 1.12 [CI, 1.02 to 1.23] for quintile 2) and a high body fat percentage (HR, 1.19 [CI, 1.08 to 1.32] for quintile 5) were associated with higher mortality in women. Similarly, in men a low BMI (HR, 1.45 [CI, 1.17 to 1.79] for quintile 1) and a high body fat percentage (HR, 1.59 [CI, 1.28 to 1.96] for quintile 5) were associated with increased mortality. Therefore, the results of this study clarify that a low BMI and a high body fat percentage are independently associated with increased mortality. They also highlight the importance of using direct measures of adiposity when estimating prognosis.

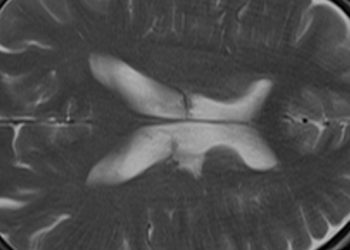

Cocaine Use and Risk of Ischemic Stroke in Young Adults

There is a known association between cocaine use and ischemic stroke; however, the association by timing, route, and frequency of use has not been fully examined. The authors conducted a population-based case-control study to examine the relationship between cocaine use and ischemic stroke in people between the ages of 15 and 49 years, with a particular focus on the effects of timing and route of cocaine use. The authors found that acute cocaine use in the previous 24 hours was strongly associated with an increased risk of stroke (age–sex–race adjusted odds ratio, 6.4; 95% CI 2.2–18.6). The association differed by route of administration: the smoking route had an adjusted odds ratio of 7.9, whereas the inhalation route had a lower adjusted odds ratio of 3.5. The odds ratio for acute cocaine use by any route was 5.7 after controlling for current alcohol use, smoking, and hypertension. In contrast to the acute use of cocaine, chronic use (months or years earlier) was not associated with an increased risk of stroke. The results of this large case-control study show that acute cocaine use is associated with a 5.7-fold increase in the odds of ischemic stroke.

Vitamin D Status During Pregnancy and Risk of Multiple Sclerosis in Offspring of Women in the Finnish Maternity Cohort

Inadequate vitamin D has been identified as a potential risk factor for developing multiple sclerosis (MS). It remains unclear whether adequate maternal vitamin D levels during pregnancy are associated with the risk of MS in the offspring. The authors conducted a prospective case-control study. They identified 193 individuals with a diagnosis of MS before December 31, 2009, whose mothers were in the Finnish Maternity Cohort and had an available serum sample from the pregnancy with the affected child. They then matched these cases with 326 controls. The primary outcome was the risk of MS among the offspring and its association with maternal vitamin D levels. The authors found that mean (SD) maternal vitamin D levels were higher in control than in case samples (15.02 [6.41] ng/mL vs 13.86 [5.49] ng/mL), although both were in the insufficient range. In terms of the primary outcome, maternal vitamin D deficiency during early pregnancy was associated with a nearly 2-fold increased risk of MS in the offspring (relative risk, 1.90; 95% CI, 1.20-3.01; p = .006) compared with women who did not have deficient vitamin D levels. There was no statistically significant association between the risk of MS and increasing serum vitamin D levels (p = .12). The results of this study add support to the association between vitamin D deficiency early in pregnancy and an increased risk of the offspring developing MS as an adult. However, the study does not provide any information as to whether there is a dose-response effect with higher levels of vitamin D. Further studies are needed.

Modifiable Neighborhood Features Associated With Adolescent Homicide

Youth violence requires research that addresses individual, family, community, and society-level risk factors. To date, little research has investigated the association between environmental neighborhood features, such as streets, buildings, and natural surroundings, and severe violent injury among youth. Such research would help identify targets for future environmental interventions. The authors conducted a population-based case-control study in Philadelphia. They identified adolescents who died by homicide from 2010 to 2012 between the ages of 13 and 20 years while residing in Philadelphia. To recruit controls at the time of each index case participant’s homicide, the authors used incidence-density sampling and random-digit dialing. The authors found that the presence of street lighting (odds ratio [OR], 0.24; 95% CI, 0.09-0.70), illuminated walk/don’t walk signs (OR, 0.16; 95% CI, 0.03-0.92), painted marked crosswalks (OR, 0.17; 95% CI, 0.04-0.63), public transportation (OR, 0.13; 95% CI, 0.03-0.49), parks (OR, 0.09; 95% CI, 0.01-0.88), and maintained vacant lots (OR, 0.17; 95% CI, 0.03-0.81) was significantly associated with decreased odds of homicide. Environmental features associated with increased odds of homicide included stop signs (OR, 4.34; 95% CI, 1.40-13.45), security bars/gratings on houses (OR, 9.23; 95% CI, 2.45-34.80), and private bushes/plantings (OR, 3.44; 95% CI, 1.18-10.01). The results of this study identify modifiable environmental features that are potential targets for future randomized intervention trials aimed at making neighborhoods safer and reducing youth violence.

Image: PD

©2016 2 Minute Medicine, Inc. All rights reserved. No works may be reproduced without expressed written consent from 2 Minute Medicine, Inc. Inquire about licensing here. No article should be construed as medical advice and is not intended as such by the authors or by 2 Minute Medicine, Inc.